Lumi

A multi-modal iOS app that uses voice-first interactions to help users learn the elements of photography

Timeline: 8 Weeks

Role: Product Design, UI/UX Design, Conversational Design

Tools: Adobe Illustrator, Figma, Adobe XD

Introduction

After immersing myself in the world of UX design for the past few years and recently graduating from UCLA with a degree in Cognitive Science + Linguistics, I couldn’t be more excited to explore the intersection of these two fields through Conversational Design. The advent of voice interactions has not only added another layer of complexity and functionality to user experiences, but has also opened the door to creating more accessible ones.

In developing my first multi-modal project, my goal was to learn the intricacies of pairing intuitive GUIs with VUIs that enhance a user’s understanding. Lumi is a photography resource that bridges the gap between theoretical and applied learning, giving users the ability to tackle a new skill through hands-on interaction.

Lumi Clickable Prototype Demo

Phase I: Explore

Preliminary Research

Before I dove into creating voice experiences, I wanted to research the tools, concepts and best practices that would help me grasp the fundamentals of designing a VUI. I started with Cathy Pearl’s Designing Voice User Interfaces (and devoured it in one sitting), which gave me an overview of the entire process. I was surprised to see how many of the research techniques I’d been using in both product design and linguistics were common to VUI design, but also humbled by the nuance and the error-handling measures required to create conversational experiences.

Some key concepts and takeaways I kept in mind when developing Lumi were:

Conversational User Interfaces - Interactions that extend beyond a single conversational turn and therefore require contextual awareness, coreference resolution, and a memory of the conversation history

Cooperative Principle - The fundamental principle of successful conversations defined by Grice’s 4 Maxims: Quantity, Quality, Relevance and Manner.

Conversationality - Acknowledgements, temporal markers, and feedback that aid in building trust between assistant and user

Confidence Scores and Confirmations - How to let users know when they have been understood, and how to inform them when an error has occurred

Setting User Expectations - While it’s possible to surface all the features and functionality through a GUI (navigation, visual onboarding), discoverability isn’t as intuitive in voice interactions, which are limited by cognitive factors such as working memory. As a result, it’s important to educate your users along the way, with interactions that inform them of possible utterances and features.

Error Handling - While GUIs and other forms of visual technology require acquired skills, conversation and language are innate. As a result, voice interactions require trust between the interface and the user, and violations of this trust are detrimental to the experience. A critical factor in the success of a conversational experience is how the assistant accounts for errors, and how it “degrades gracefully” when one occurs.
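To make the confirmation and error-handling concepts above more concrete, here is a minimal sketch of a common VUI pattern: choosing a confirmation strategy from the speech recognizer’s confidence score. The thresholds (0.85 and 0.45), the function names `choose_confirmation` and `respond`, and the prompt wording are all hypothetical placeholders for illustration, not values from Lumi or from any particular speech platform.

```python
from enum import Enum


class Confirmation(Enum):
    IMPLICIT = "implicit"   # act on the input and echo it back in the response
    EXPLICIT = "explicit"   # ask a yes/no question before acting
    REPROMPT = "reprompt"   # treat the input as not understood and ask again


# Hypothetical thresholds; a real app would tune these against test recordings.
HIGH_CONFIDENCE = 0.85
LOW_CONFIDENCE = 0.45


def choose_confirmation(confidence: float) -> Confirmation:
    """Pick a confirmation strategy from a recognizer confidence in [0, 1]."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= HIGH_CONFIDENCE:
        return Confirmation.IMPLICIT
    if confidence >= LOW_CONFIDENCE:
        return Confirmation.EXPLICIT
    return Confirmation.REPROMPT


def respond(aperture: str, confidence: float) -> str:
    """Build a response to a spoken aperture value (hypothetical wording)."""
    strategy = choose_confirmation(confidence)
    if strategy is Confirmation.IMPLICIT:
        return f"Got it, {aperture}."
    if strategy is Confirmation.EXPLICIT:
        return f"Did you say {aperture}?"
    return "Sorry, I didn't catch that. What aperture are you using?"
```

The middle band is the design choice that matters: asking an explicit question only when the recognizer is unsure keeps high-confidence turns short while still protecting trust when an error is likely.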

Initial Brainstorm

With a stronger grasp on the principles and goals of Conversational Design, I began brainstorming use cases for my first project. From keeping track of time-related tasks, to enhancing accessibility, to providing a new means of learning language, the possibilities for voice-driven apps seemed endless.

In the end, I decided to create a product that helped users learn a new skill, particularly one that requires both hands to be occupied. Here, voice interactions could increase users’ success and reduce the frustration that comes with climbing a new skill’s learning curve.

As a photographer myself, I’ve experienced firsthand how inefficient the current methods of self-learning photography are. I would have to put the camera down to look up settings and concepts, then pick it back up, plug in the settings, and put it back down to troubleshoot the inevitable errors I’d make. When I spoke to several other photographers, we lamented the learning curve while also acknowledging the importance of trial and error. Voice interactions seemed like an ideal solution that would ease some of the frustration without taking away from interactivity.

A Mini Study in Methodology

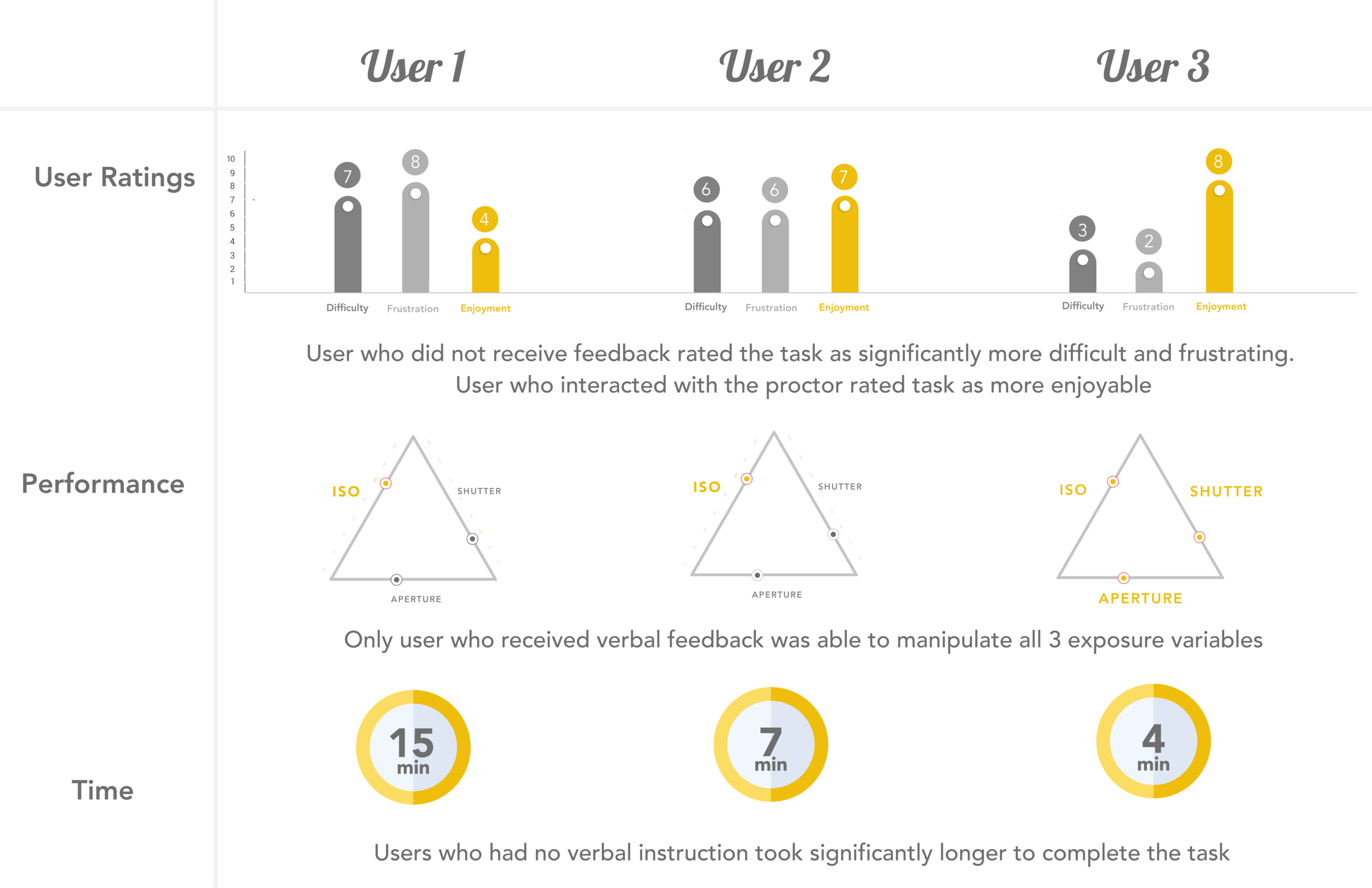

With my product idea in mind, I wanted to observe how users approached learning a new task. Was there a learning curve, and if so, how did different methods of instruction affect it?

Experiment: I recruited three participants who had no experience with photography and had never used a DSLR camera. They were given a camera in manual mode (no settings are adjusted automatically) and had to recreate a reference photo, matching its composition, depth of field and lighting. Each user received a different level of instruction to help them complete the task.

Results

I was surprised by how closely the results matched my initial hypothesis. Each participant completed a self-report survey detailing their emotional and objective experience with the task.

Users 1 and 2 both gave less favorable ratings on the self-report measures of difficulty and reported higher frustration levels.

User 3 (full support) completed the task faster, took fewer shots, and was able to manipulate all three exposure factors. Furthermore, they rated the task as pleasurable and were confident they could complete it again with no assistance.

Cooperative Principle and Insights

I served as the proctor for Condition 3, and conversing with the participant made me aware of how our exchanges followed each of the 4 maxims of Grice’s Cooperative Principle. Operationalizing these principles based on our conversation gave me some concrete interactions I could include when designing the VUI component of my prototype.

Phase II: Define

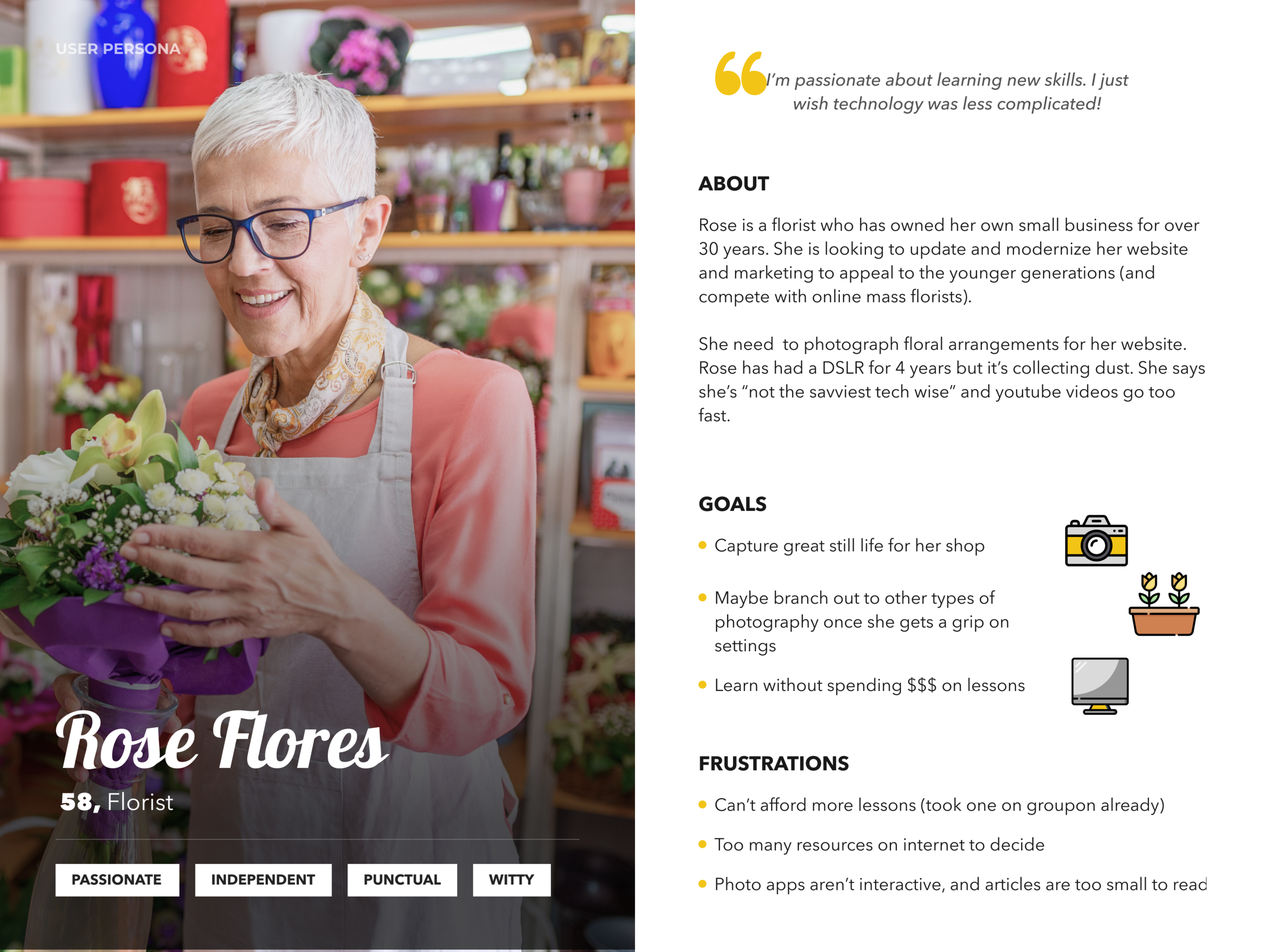

Personas

With a better understanding of pain points and learning methodologies, it was important to keep these salient throughout the development process. I created two personas with vastly different use cases but a shared goal of learning photography. While other products I’ve designed have targeted a specific age group, Lumi needed to be accessible to users from all walks of life to accomplish its goal.

Phase III: Develop

Features and Core Flow

After crystallizing the problem statement, I began to ideate on features that would be critical to resolving the pain points users were currently experiencing. I distilled the main functionality of the app into 4 distinct features that would help users learn the basics of the exposure triangle, the fundamental principle of exposure in photography.

Exposure triangle with voice - main page where users could learn through interactive, voice-first workshops

Negatives - catalogue of past workshops that stores transcripts and settings

Instances of each workshop - each with settings and further details pertaining to photo type, lighting situation, trials and successes

Info Pages - Pages to learn more about each of the 3 topics: Aperture, ISO, and Shutter Speed
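The exposure triangle these features teach can be expressed with the standard exposure value (EV) relationship EV = log2(N²/t), where N is the f-number and t is the shutter time in seconds; raising ISO from 100 to S adds log2(S/100) stops of sensitivity. The sketch below illustrates that textbook formula rather than code from Lumi, and the function name `exposure_value` is hypothetical. It shows why trading one stop of aperture or ISO for one stop of shutter speed leaves the exposure unchanged.

```python
import math


def exposure_value(f_number: float, shutter_s: float, iso: float = 100) -> float:
    """ISO 100 exposure value (EV100) that a combination of settings is matched to.

    Combinations with equal values give the same image brightness for the same
    scene; each additional 1.0 makes the image one stop darker.
    """
    if f_number <= 0 or shutter_s <= 0 or iso <= 0:
        raise ValueError("settings must be positive")
    # Aperture and shutter speed: EV = log2(N^2 / t)
    ev = math.log2(f_number ** 2 / shutter_s)
    # Doubling ISO doubles sensitivity, so the same settings need one stop less light.
    return ev - math.log2(iso / 100)


if __name__ == "__main__":
    base = exposure_value(8, 1 / 125)              # f/8, 1/125 s, ISO 100
    wider = exposure_value(5.6, 1 / 250)           # one stop wider, one stop faster
    higher_iso = exposure_value(8, 1 / 250, 200)   # one stop faster, one stop more ISO
    print(round(base, 2), round(wider, 2), round(higher_iso, 2))
```

The first and third combinations match exactly, while the second differs by a few hundredths of a stop because f/5.6 is a rounded nominal value of √32 ≈ 5.66.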

Sample Dialogues

With the MVP features decided, the first step in developing voice interactions was to lay out some conversation paths detailing how users would converse with the product. I drafted some sample dialogues between the user and Lumi to get an idea of where a VUI would be necessary and helpful.

In drafting these dialogues, it was important to cover not only the “blue sky” path where all conversation turns went according to plan, but also some error paths that would help me gain insight into how to handle situations that weren’t as smooth.
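A common way to handle these error paths is escalating reprompts: each consecutive no-match (speech heard but not understood) or no-input (no speech) event gets a more helpful prompt, and after a fixed number of failures the assistant hands off gracefully instead of looping. The sketch below assumes a hypothetical `ShutterSpeedTurn` step, hypothetical prompt wording, and a limit of three failures; none of these come from Lumi’s actual dialogues.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical prompts, indexed by error type and failure count.
PROMPTS = {
    "no_match_1": "Sorry, what shutter speed is your camera set to?",
    "no_match_2": "You can say something like 'one two-fiftieth'. What's your shutter speed?",
    "no_input_1": "Take your time. What shutter speed are you using?",
    "no_input_2": "When you're ready, tell me the number on your shutter speed dial.",
    "give_up": "Let's skip this for now. You can tap the shutter speed node to enter it instead.",
}
MAX_FAILURES = 3


@dataclass
class ShutterSpeedTurn:
    failures: int = 0                # shared counter for no-match and no-input events
    value: Optional[str] = None

    def handle(self, heard_speech: bool, recognized: Optional[str]) -> str:
        """Return Lumi's next prompt for this step of the workshop."""
        if recognized is not None:
            # Blue sky path: store the value and reset the error count.
            self.value = recognized
            self.failures = 0
            return f"Got it, {recognized}."
        self.failures += 1
        if self.failures >= MAX_FAILURES:
            # Degrade gracefully by falling back to the GUI instead of looping.
            return PROMPTS["give_up"]
        kind = "no_match" if heard_speech else "no_input"
        return PROMPTS[f"{kind}_{self.failures}"]
```

Capping the retries is what keeps an error path from becoming an endless loop of “Sorry, I didn’t catch that.”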

User Testing: Trying out the Sample Dialogues

To get feedback early on, I recruited some of my friends to test out the interactions I’d written. These table reads were a little cringeworthy at some points with lots of laughs and awkward pauses, but helped illuminate gaps in the conversations I’d written, as well as phrasing that sounded good on paper but not when spoken aloud.

Video: Table Read

Reflections

After the table read, there were a few problems that I needed to address.

Prompts and phrases needed to be shorter - while longer sentences can be skimmed in a written dialogue, spoken words need to be held in working memory.

Eliminate unnecessary conversational fillers - while users appreciated temporal markers and some conversational elements, they found anecdotal details annoying because they got in the way of the task at hand.

Action Steps at the End - if educational context is necessary, put the action step at the end so that it’s fresh in working memory and doesn’t need to be repeated (Miller’s Law: working memory holds only about 7 ± 2 items!)

Wake Word and Timeouts - it’s annoying to keep using the wake word to address Lumi, but in some situations (like changing camera settings) it’s necessary. The table read helped highlight where in the flow a longer NSP timeout was imperative, and where the wake word could simply be used to reinitiate the conversation.
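The timeout and wake-word decisions from the table read could be captured as per-state configuration. The sketch below is an assumption about how such a table might look: the `TurnConfig` fields, the state names, and the timeout values (in seconds) are hypothetical, and they are not taken from the Adobe XD prototype.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TurnConfig:
    nsp_timeout_s: float    # how long to keep listening for a reply after a prompt
    needs_wake_word: bool   # must the user say the wake word before replying?


# Hypothetical per-state settings reflecting the table-read reflections.
TURN_CONFIGS = {
    # Short answers such as "yes" or "f/8": a brief listening window, no wake word.
    "confirm_setting": TurnConfig(nsp_timeout_s=5.0, needs_wake_word=False),
    # User is adjusting dials with both hands: keep listening much longer.
    "adjust_camera": TurnConfig(nsp_timeout_s=30.0, needs_wake_word=False),
    # User is shooting freely: stop listening and require the wake word to resume.
    "free_shooting": TurnConfig(nsp_timeout_s=0.0, needs_wake_word=True),
}


def should_listen(state: str, seconds_since_prompt: float, heard_wake_word: bool) -> bool:
    """Decide whether incoming speech should be treated as a reply to Lumi."""
    config = TURN_CONFIGS[state]
    if config.needs_wake_word:
        return heard_wake_word
    # Inside the timeout window no wake word is needed; after it, the wake word
    # reinitiates the conversation.
    return seconds_since_prompt <= config.nsp_timeout_s or heard_wake_word
```

Keeping these values in one table makes it easy to adjust a single step of the flow after each round of user testing.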

Flow Diagram

The flow diagram below maps out all possible paths a user could take through the app, with both GUI and VUI features included.

While complex (biggest flow chart I’ve ever made), creating this diagram was a great exercise to ensure the logic of the app was clear, with defined endpoints so users didn’t get stuck in an infinite loop!
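One way to double-check the “no infinite loop” property of a flow diagram is to model it as a directed graph and verify that every state can reach at least one endpoint. The states and transitions below are a simplified, hypothetical stand-in for Lumi’s diagram, not a transcription of it; `stuck_states` searches backwards from the endpoints and reports any state that can never finish.

```python
from __future__ import annotations

from collections import deque

# Hypothetical, simplified flow: each state maps to the states it can lead to.
FLOW = {
    "home": ["start_workshop", "negatives", "info_pages", "exit"],
    "start_workshop": ["choose_photo_type"],
    "choose_photo_type": ["set_aperture"],
    "set_aperture": ["set_shutter", "set_aperture"],   # a reprompt loops back
    "set_shutter": ["set_iso"],
    "set_iso": ["take_photo"],
    "take_photo": ["review", "set_aperture"],          # a retry adjusts settings
    "review": ["save_to_negatives"],
    "save_to_negatives": [],
    "negatives": ["home"],
    "info_pages": ["home"],
    "exit": [],
}
ENDPOINTS = {"save_to_negatives", "exit"}


def stuck_states(flow: dict[str, list[str]], endpoints: set[str]) -> set[str]:
    """Return states from which no endpoint is reachable (potential infinite loops)."""
    # Build reverse edges so we can search backwards from the endpoints.
    reverse: dict[str, list[str]] = {state: [] for state in flow}
    for state, targets in flow.items():
        for target in targets:
            reverse.setdefault(target, []).append(state)
    can_finish = set(endpoints)
    queue = deque(endpoints)
    while queue:
        state = queue.popleft()
        for previous in reverse.get(state, []):
            if previous not in can_finish:
                can_finish.add(previous)
                queue.append(previous)
    return set(flow) - can_finish
```

Loops themselves are fine (a reprompt is a loop); the check only flags loops that have no way out.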


Med-Fi Wireframes

Phase IV: Deliver

Features + High Fidelity Wireframes

The main workshop page is where all the interactivity happens. Users can begin a voice-first workshop that guides them through a photo session, providing adaptive feedback and storing settings. Each node on the exposure triangle updates in response to changes in settings, and the dialogue box beneath the triangle refreshes the key information with every conversational turn.

Main Workshop Page
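One way the triangle’s nodes could stay in sync is to hold exposure constant: when the user changes the aperture or ISO, compute the shutter speed that compensates. This is a hypothetical sketch (the function names, the choice of shutter speed as the compensating setting, and the list of full-stop speeds are assumptions), built on the fact that the required exposure time scales with the square of the f-number and inversely with ISO.

```python
import math

# Common full-stop shutter speeds in seconds (a subset, for illustration).
STANDARD_SPEEDS = [1, 1/2, 1/4, 1/8, 1/15, 1/30, 1/60, 1/125,
                   1/250, 1/500, 1/1000, 1/2000, 1/4000]


def compensated_shutter(shutter_s: float, old_f: float, new_f: float,
                        old_iso: float = 100, new_iso: float = 100) -> float:
    """Shutter time that keeps exposure constant after an aperture or ISO change."""
    # Light through the lens scales with 1/N^2, so the time scales with N^2.
    # Sensitivity scales with ISO, so the time scales with 1/ISO.
    return shutter_s * (new_f / old_f) ** 2 * (old_iso / new_iso)


def nearest_standard_speed(shutter_s: float) -> float:
    """Snap a computed time to the closest standard speed, measured in stops."""
    return min(STANDARD_SPEEDS, key=lambda s: abs(math.log2(s / shutter_s)))


def format_speed(shutter_s: float) -> str:
    """Format a shutter time the way cameras usually display it."""
    if shutter_s >= 1:
        return f"{shutter_s:g}s"
    return f"1/{round(1 / shutter_s)}"
```

For example, opening up from f/8 to f/5.6 at 1/125 s gives a computed time of about 1/255 s, which snaps to the familiar 1/250 s the shutter speed node would display.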

The Negatives page catalogues the user’s interactions with both their camera and Lumi itself. Transcripts of each workshop are stored for easy access, as well as settings for each photo taken. Additionally, there is space for users to jot down notes and upload more photos within each instance.

Negatives
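The Negatives page implies a simple data model: each workshop instance keeps its transcript, the settings for each photo taken, whether each attempt succeeded, and the user’s own notes. The dataclasses below are a hypothetical sketch of such a record; the class and field names are assumptions, not Lumi’s actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class PhotoRecord:
    f_number: float
    shutter_s: float
    iso: int
    success: bool = False          # did this shot match the workshop goal?


@dataclass
class TranscriptTurn:
    speaker: str                   # "user" or "lumi"
    text: str


@dataclass
class Workshop:
    """One entry on the Negatives page (hypothetical schema)."""
    started_at: datetime
    photo_type: str                # e.g. "portrait"
    lighting: str                  # e.g. "overcast"
    transcript: list[TranscriptTurn] = field(default_factory=list)
    photos: list[PhotoRecord] = field(default_factory=list)
    notes: str = ""

    def add_turn(self, speaker: str, text: str) -> None:
        self.transcript.append(TranscriptTurn(speaker, text))

    def log_photo(self, record: PhotoRecord) -> None:
        self.photos.append(record)

    def trials(self) -> int:
        return len(self.photos)

    def successes(self) -> int:
        return sum(1 for photo in self.photos if photo.success)
```

Storing settings per photo, rather than once per workshop, is what would let a user compare a failed attempt against the successful one.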

Info pages provide additional features specific to the user’s camera, as well as a deeper dive into each of the three components of the exposure triangle. Having already practiced in workshops, users can more easily solidify their theoretical knowledge.

Info Pages

Adobe XD Prototype

The most fulfilling part of the process was prototyping Lumi in Adobe XD Voice. The prototype not only allowed me to fine-tune how the GUI and VUI features were integrated, but also gave me the opportunity to create and implement a wake word and to set parameters such as NSP timeouts. Prototyping Lumi brought the project to life and made user testing more realistic as well.

You can test out the XD prototype for yourself here

Lessons Learned + Further Steps

Designing a multi-modal application has definitely been the most challenging yet most fulfilling project I’ve undertaken. I’d initially planned on spending a few weeks drafting a product, but Lumi quickly turned into my longest and most detailed case study.

From the new toolset I needed to acquire to even begin thinking about voice interaction, to the challenge of simultaneously designing complementary GUIs and VUIs, Lumi has opened my eyes to the complexity of integrating multiple modes of interaction. However, this experience has also highlighted the universals of experience design, such as the importance of user testing and logical information architecture, that are common to the process of problem solving regardless of the final modality.

Personally, Lumi will always be close to my heart because it has given me the opportunity to combine my 3 passions (UX Design, Linguistics, and Photography) to address a problem that’s been largely unresolved. Hopefully in the future I can work with a Dev Team to ship Lumi’s MVP and make photography more accessible to all.